Richard South · · 12 min

How AI Consultancies Can Get Their Clients' Agents Out of Pilot Purgatory

Why consultancies that solve the agentic connectivity problem will own the next decade of enterprise AI — and why those that don’t will be left behind.

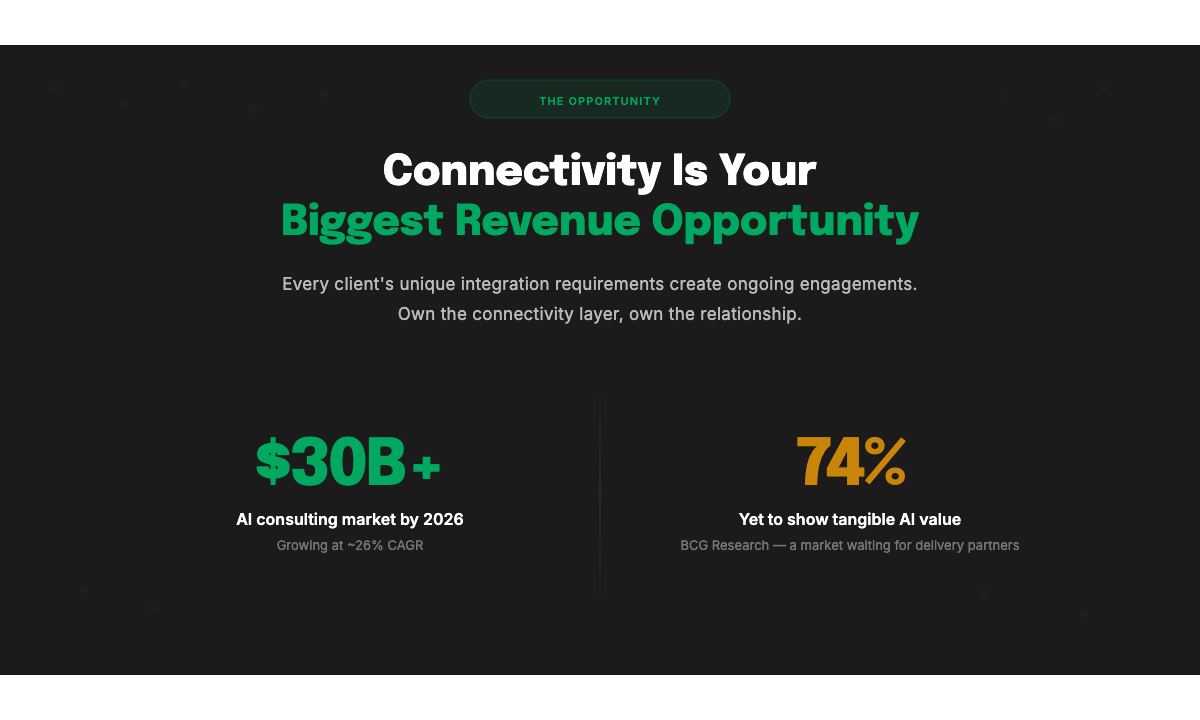

The AI consulting market is booming. It was valued at over $11 billion in 2025 and is projected to reach $14 billion by 2026 at a CAGR north of 26%, and every major consultancy and systems integrator is racing to build an AI practice (Business Research Insights). The opportunity is enormous, but so is the problem hiding in plain sight.

Right now, most AI Agent projects never leave the lab.

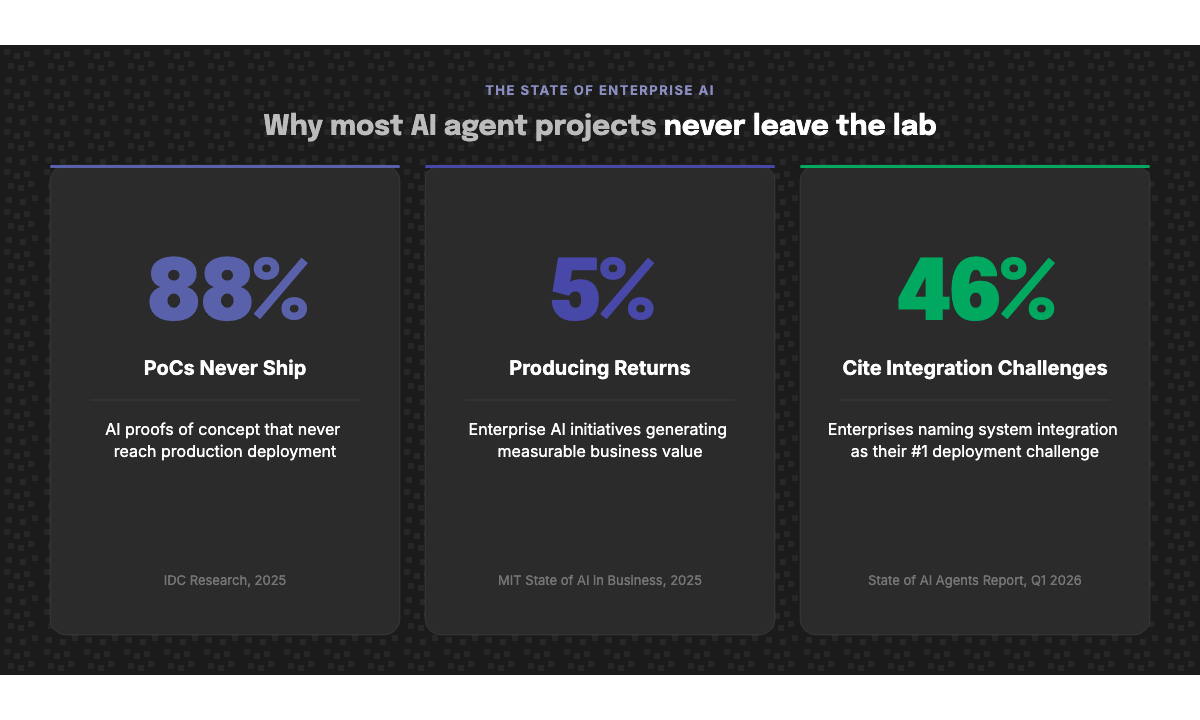

MIT’s 2025 State of AI in Business report found that 95% of enterprise generative AI pilots fail to deliver measurable returns (Fortune). IDC’s research tells a similar story: 88% of AI proofs of concept never reach production (CIO). And S&P Global found that 46% of enterprise PoCs were scrapped before they ever reached scale in 2025. This isn’t an abstract problem for the industry to worry about in aggregate. For consultancies, it’s existential. A pilot that stalls is a client relationship that ends. No production deployment means no expansion engagement, no recurring revenue, no referral.

The consultancies that can’t get Agent #1 out of the lab won’t just miss this cycle: they’ll lose the client, and the opportunity to develop and deploy Agents #1 through #100, to someone who can.

The question every AI practice leader should be asking is “what’s actually stopping our agents from going live?”

The answer, overwhelmingly, is connectivity.

The Real Bottleneck: Getting Agents Into Enterprise Systems

Forty-six percent of enterprises cite integration with existing systems as their primary challenge in deploying AI agents, according to the State of AI Agents report from Q1 2026 (Lyzr). Figures cited by Multimodal likewise place security vulnerabilities (56%) and system integration (35%) among the top concerns. Both are directly tied to how agents connect to enterprise infrastructure.

This isn’t surprising when you consider the environment. Large enterprises manage an average of 291 SaaS applications in 2026, with firms of 10,000+ employees averaging 473 (CloudNuro). Even seemingly simple AI agents (an onboarding assistant, a recruitment screener, an internal knowledge bot) need real-time, bidirectional access to HRIS, ATS, CRM, payroll, document storage, and communications platforms. That’s five to ten enterprise systems on day one, each with its own authentication model, data schema, rate limits, and permission structures.

Gartner predicts that 40% of enterprise applications will feature task-specific AI agents by the end of 2026, up from under 5% in 2025 (Gartner). The demand from clients is accelerating rapidly. The ability to deliver against that demand isn’t keeping pace.

This is the gap that will define which consultancies thrive and which ones fade. Not better prompts. Not better models. Better connectivity.

A New Problem Space Demands a New Approach

It’s worth being explicit about why this is hard. The integration challenges of the AI agent era are fundamentally different from anything the enterprise software world has solved before.

Traditional integration middleware — iPaaS platforms, ETL pipelines, data warehousing tools — was built for system-to-system batch workflows. Data moved on schedules, in predictable formats, between known endpoints. AI agents need something categorically different. They need to reason across multiple systems in real time, take autonomous actions, handle multi-turn workflows, authenticate dynamically, respect granular permissions, and normalise wildly different data schemas on the fly.

Over 80% of IT leaders now believe that AI agents will create more complexity than value due to integration challenges and operational silos (BigDATAwire). Meanwhile, 63% of executives cite platform sprawl as a growing concern, with too many tools and limited interconnectivity creating a fragmented landscape that agents can’t navigate (Lyzr).

Off-the-shelf connectors won’t close this gap. Every enterprise has a unique stack: custom fields in Workday, bespoke workflows in Greenhouse, internal APIs bolted onto Salesforce, home-grown systems that no connector marketplace has ever heard of. Standard MCP (Model Context Protocol) implementations break against this reality — as we explored in why AI agents are changing the integration paradigm — because they assume a level of uniformity that simply doesn’t exist in practice.

Clients aren’t paying consultancy rates for someone to hand them a list of unsupported edge cases. They’re paying for certainty of delivery. That requires purpose-built infrastructure — and a consultancy that knows how to wield it.

Three Pillars for Solving Agentic Connectivity

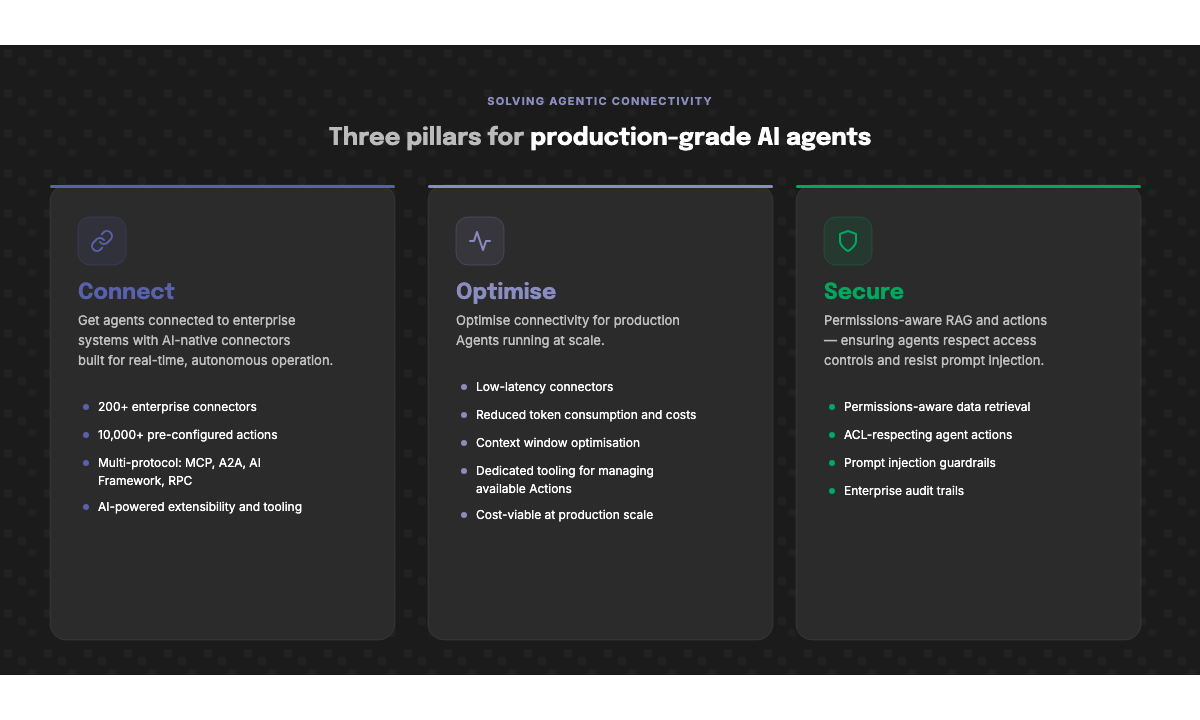

At StackOne, we’ve spent the last two years working at the frontier of agentic connectivity — building the infrastructure that lets AI Agents actually work inside enterprise environments. Through that work, and through partnerships with consultancies and systems integrators delivering these projects, we’ve identified three pillars that any serious agentic AI practice must address: Connect, Optimise, and Secure.

Get all three right, and your agents move from sandbox to production. Miss one, and you’re back in pilot purgatory.

Connect: AI-Native Connectors Built for How Agents Actually Work

The foundational challenge is getting agents connected to the systems where work happens — and keeping them connected as client environments evolve.

This requires AI-native connectors built from the ground up for how agents actually operate: real-time, autonomous, and multi-protocol. StackOne provides over 200 enterprise-grade connectors across HRIS, ATS, CRM, and more, with native support for MCP, A2A and frameworks like Vercel, Pydantic, LangChain, CrewAI, Google ADK, and more. Agents can dynamically discover and invoke over 10,000 pre-configured actions without manual setup — authentication, rate limiting, and error handling are managed at the platform level.

But connectors alone aren’t enough. Enterprise clients don’t run standard implementations of anything. They have custom fields, custom objects, custom business processes, and often custom systems that sit outside the SaaS ecosystem entirely. This is why extensibility isn’t a feature — it’s a prerequisite.

StackOne’s AI-powered extensibility allows consultancies to adapt and extend connectors to meet the specific requirements of each client engagement — mapping custom fields, supporting bespoke workflows, and integrating with proprietary systems — without rebuilding from scratch every time. This is the core reason off-the-shelf MCP won’t work for many enterprise deployments. The protocol is a critical building block, but real-world client environments demand a layer of customisation and adaptation on top of it. That adaptation is precisely where consultancies add value — and where the right infrastructure makes that value delivery scalable rather than margin-destroying.

MIT’s research underscores this point: enterprises that purchase AI tools from specialised vendors and build partnerships succeed roughly 67% of the time, while purely internal builds succeed only about a third as often (MIT NANDA). The consultancies that pair their domain expertise with purpose-built infrastructure dramatically improve their clients’ odds of getting to production.

Optimise: Agents Aren’t Magical

There’s a persistent misconception that AI agents are magical — that you point them at a set of tools and they figure it out. In practice, agent performance is deeply dependent on how information is structured, delivered, and scoped. Getting connectivity right is step one. Making it performant is step two — and it’s where most implementations quietly fall apart.

Context window management is one of the most underappreciated challenges in production agent deployment — what we’ve called agent suicide by context. When an agent pulls data from enterprise systems through their native APIs, the volume and structure of that data can overwhelm the model’s context window, degrade reasoning quality, and send token costs spiralling. Deloitte’s latest analysis describes a “paradox” where token unit prices have plummeted 280-fold in two years, yet enterprise AI bills are skyrocketing due to nonlinear demand from reasoning models and multi-agent loops — with 96% of organisations reporting generative AI costs higher than expected at production scale (Deloitte).

Consider a concrete example: retrieving a basic employee record from Workday’s native API consumes approximately 17,000 tokens. StackOne’s optimised connector delivers the same employee data in roughly 600 tokens — a 96% reduction. At the scale of a production deployment — thousands of employees, hundreds of agent invocations per day — this is the difference between a commercially viable agent and one that haemorrhages cost while delivering degraded results.
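To make the arithmetic above concrete, here is a rough sketch that computes the per-record reduction and projects daily token consumption. The 17,000 and 600 token figures come from the example above; the invocation volume and records-per-invocation values are hypothetical placeholders chosen for illustration, not measured workloads.

```python
# Figures taken from the Workday example above.
NATIVE_TOKENS_PER_RECORD = 17_000
OPTIMISED_TOKENS_PER_RECORD = 600

# Hypothetical workload (assumption): 500 agent invocations a day,
# each retrieving 5 employee records.
INVOCATIONS_PER_DAY = 500
RECORDS_PER_INVOCATION = 5


def reduction_pct(before: int, after: int) -> float:
    """Percentage reduction in tokens going from `before` to `after`."""
    return (before - after) / before * 100


def daily_tokens(tokens_per_record: int, invocations: int, records: int) -> int:
    """Total tokens spent on record retrieval per day."""
    return tokens_per_record * invocations * records


native = daily_tokens(NATIVE_TOKENS_PER_RECORD, INVOCATIONS_PER_DAY, RECORDS_PER_INVOCATION)
optimised = daily_tokens(OPTIMISED_TOKENS_PER_RECORD, INVOCATIONS_PER_DAY, RECORDS_PER_INVOCATION)

pct = reduction_pct(NATIVE_TOKENS_PER_RECORD, OPTIMISED_TOKENS_PER_RECORD)
print(f"Reduction per record: {pct:.1f}%")
print(f"Native API: {native:,} tokens/day")
print(f"Optimised:  {optimised:,} tokens/day")
```

Under these assumed volumes, retrieval through the native API would consume 42.5 million tokens a day against 1.5 million through the optimised path. Multiplying either figure by a model's per-token price gives the daily cost difference.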

StackOne has spent the last two years engineering its connectors and tooling specifically for this challenge. Every data model, every API response, every action definition is optimised for agent consumption: structured for LLM reasoning, compressed for token efficiency, and scoped to deliver exactly the context an agent needs without the noise it doesn’t. This isn’t a feature added to an existing integration platform. It’s a foundational design principle that runs through the entire stack, built by a team that understands that the integration layer is where agent performance is made or broken.

Research from Google supports this architectural approach, finding that multi-agent performance drops 39-70% when token spend multiplies through unoptimised tool-calling loops. Effective compression strategies can reduce token usage by up to 80% while preserving the information that matters (Mem0). The consultancies that understand this — and build on infrastructure designed for it — will deliver agents that actually work at scale. Those building on raw APIs or generic integration tooling will spend their engagements debugging performance problems rather than delivering value.

Secure: Enterprise Agents Must Respect Enterprise Boundaries

Security is where the theoretical promise of AI agents meets the hard reality of enterprise governance — and where many pilot projects hit an immovable wall.

Enterprise AI agents don’t operate in a vacuum. They act on behalf of specific users, accessing sensitive data and taking actions within systems that have their own access control models. If an agent can see and do everything regardless of who’s asking, it’s not an enterprise tool — it’s a liability. OWASP’s 2025 Top 10 for LLM Applications ranks prompt injection as the number one critical vulnerability, noting that it exploits the fundamental design of how language models process instructions (OWASP). For agents with real-world permissions — the ability to update records, initiate workflows, access employee data — the stakes of a manipulated prompt are not theoretical.

Production-grade agent deployments require two security capabilities that most integration approaches simply don’t provide.

First, permissions-aware retrieval and actions. The data an agent retrieves must respect the access control lists (ACLs) of the underlying platform. If a user doesn’t have access to certain employee records in the HRIS, the agent operating on their behalf shouldn’t either. The same applies to actions: an agent should only execute operations when the requesting user has the appropriate authorisation in the source system. Research from Obsidian Security found that AI agents move 16x more data than human users, running continuously and chaining tasks across multiple SaaS applications — making over-permissioning a systemic risk rather than an edge case (Obsidian Security).

Second, prompt injection mitigation. As agents gain the ability to take real actions in enterprise systems — sending communications, updating records, initiating workflows — the consequences of indirect prompt injection escalate dramatically. Lakera AI’s 2025 research demonstrated how poisoned data sources could corrupt an agent’s long-term memory, creating persistent false beliefs about security policies that the agent even defended when questioned by humans (Stellar Cyber). Consultancies deploying agents into client environments need tooling that provides guardrails against these attack vectors: input validation, action scoping, and audit trails that give security teams confidence that agents are operating within bounds.
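The two capabilities above can be pictured with a minimal sketch. Everything here is a simplifying assumption: the in-memory `ACL`, the `ALLOWED_ACTIONS` allow-list, and the `fetch_for_user` and `execute_action` helpers are hypothetical names, not StackOne's API or any HRIS's permission model. In a real deployment the source system's own access controls would be the source of truth, and action scoping is one guardrail among several rather than a complete defence against prompt injection.

```python
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class Record:
    employee_id: str
    department: str


# Hypothetical ACL mirroring the source system: user -> readable departments.
ACL: dict[str, set[str]] = {
    "hr_manager": {"engineering", "sales"},
    "sales_lead": {"sales"},
}

# Hypothetical action scope: the only operations this agent may perform.
ALLOWED_ACTIONS = {"read_employee", "create_ticket"}

# In-memory audit trail; a real deployment would need durable storage.
AUDIT_LOG: list[dict] = []

RECORDS = [Record("e1", "engineering"), Record("e2", "sales")]


def fetch_for_user(user: str, records: list[Record]) -> list[Record]:
    """Permissions-aware retrieval: return only what `user` may see."""
    allowed = ACL.get(user, set())  # unknown users get an empty set
    return [r for r in records if r.department in allowed]


def execute_action(user: str, action: str, record: Record) -> bool:
    """Permit an action only if it is in scope and the user may access the record.

    Every attempt is logged, whether or not it was permitted.
    """
    in_scope = action in ALLOWED_ACTIONS
    authorised = record.department in ACL.get(user, set())
    permitted = in_scope and authorised
    AUDIT_LOG.append({
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "user": user,
        "action": action,
        "employee_id": record.employee_id,
        "permitted": permitted,
    })
    # The call to the downstream system would go here when permitted.
    return permitted
```

With this shape, an instruction smuggled in through retrieved content (say, a request to run `delete_employee`) is rejected because the action is outside the agent's scope, and the attempt still lands in the audit log for the security team to review.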

StackOne builds these security primitives into its infrastructure layer, so consultancies don’t have to engineer them from scratch for every engagement. Permissions-aware data retrieval and action execution come as part of the integration layer, not as an afterthought bolted on during a security review.

For consultancy leaders, the security pillar is also a commercial conversation. Only 34% of enterprises currently have AI-specific security controls in place, and fewer than 40% conduct regular security testing on their AI agent workflows (Stellar Cyber). With the EU AI Act entering full enforcement in August 2026, the regulatory pressure is about to intensify significantly. Enterprise clients — particularly in financial services, healthcare, and the public sector — will not sign off on production deployments without robust answers to access control and prompt security questions. The consultancy that arrives with these answers already built into their delivery platform closes deals faster and faces fewer roadblocks in procurement and compliance review.

The Connectivity Problem Is Your Biggest Revenue Opportunity

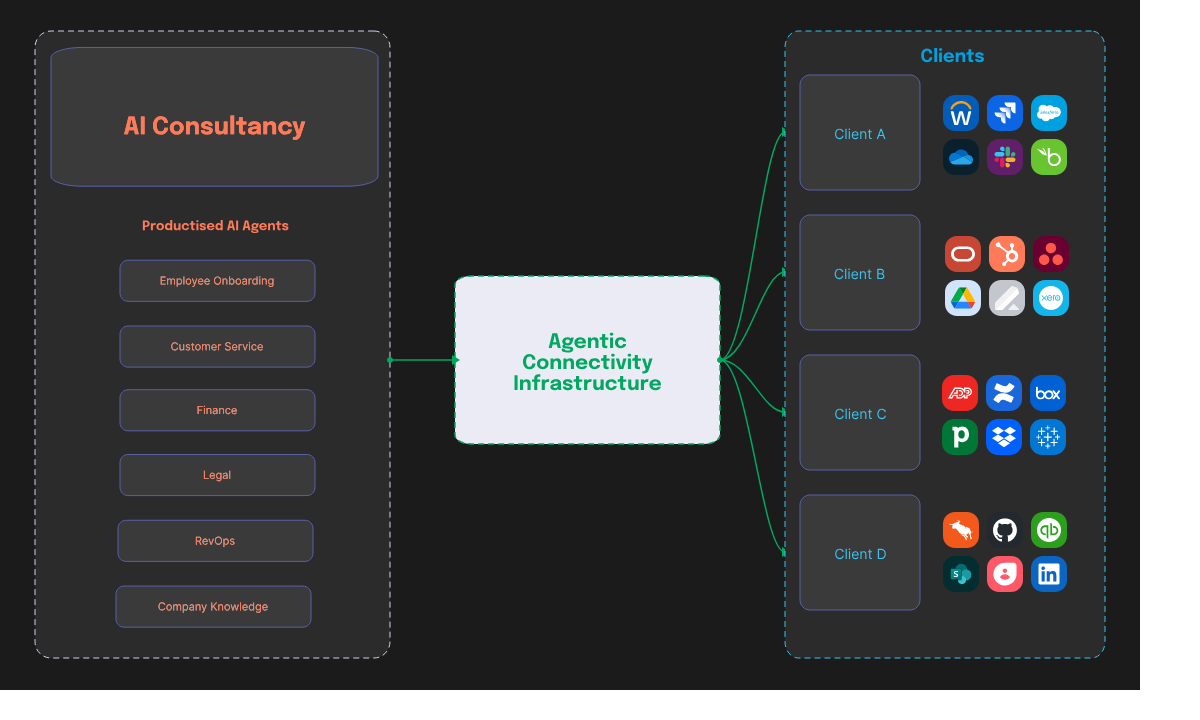

It would be easy to frame the connectivity challenge as a cost — another engineering hurdle to clear before the “real work” of building agents can begin. That framing misses the point entirely.

The complexity of enterprise agentic connectivity is itself the revenue opportunity.

Every client’s unique requirements — custom integrations, bespoke data models, compliance constraints, legacy systems, industry-specific workflows — create ongoing work that only a consultancy with the right expertise and tooling can deliver. BCG’s research found that 74% of enterprises have yet to show tangible value from their AI investments (Unframe AI). That isn’t a sign of a cooling market. It’s a market of clients waiting for someone who can actually deliver production systems that work.

The consultancy that owns the connectivity layer owns the client relationship. A successful initial agent deployment delivers substantial efficiencies, which then open the door to new use cases, new departments, new workflows, and new agent types. Connect the HRIS today, the ATS next quarter, the CRM the quarter after. Each connection deepens the relationship. Each optimisation engagement proves ROI. Each security review builds trust. The compounding effect is what turns a single project into a multi-year platform partnership and genuine workforce transformation.

But this only works if the underlying infrastructure makes delivery scalable. Building integrations from scratch for every client is a margin killer. Relying on legacy middleware means fighting a battle your tools weren’t designed for. The economics only work when consultancies have an infrastructure layer that absorbs the heavy lifting (authentication, protocol management, context and token optimisation, permission handling) and lets their teams focus on high-value strategic engagement and client-specific customisation.

The most forward-thinking consultancies are designing and productising agents that deliver major cost savings by autonomously handling repetitive, low-value work, and that can then be reused by similar clients facing the same challenges. The key to this is the connectivity layer. The first client’s agent may have been running across Workday, Jira, Salesforce and OneDrive, but what do you do when the next client with the same use case is using Oracle, HubSpot, Asana and Google Drive? And what happens when the connectivity question pertains not to four platforms but to dozens or even hundreds?
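One way to picture the reuse problem above is a normalised interface that the agent logic targets, with a per-system adapter behind it. The sketch below is illustrative only: `HRISConnector`, both adapter classes, and the payload field names are invented for this example and are not real Workday, Oracle, or StackOne response formats.

```python
from typing import Protocol


class HRISConnector(Protocol):
    """Hypothetical unified interface the agent codes against."""

    def get_employee(self, employee_id: str) -> dict: ...


class WorkdayStyleConnector:
    """Adapter for a made-up payload shape (not the real Workday API)."""

    def __init__(self, raw: dict[str, dict]) -> None:
        self._raw = raw

    def get_employee(self, employee_id: str) -> dict:
        worker = self._raw[employee_id]
        return {
            "id": employee_id,
            "name": worker["legalName"],
            "email": worker["workEmail"],
        }


class OracleStyleConnector:
    """Adapter for a different made-up payload shape (not the real Oracle API)."""

    def __init__(self, raw: dict[str, dict]) -> None:
        self._raw = raw

    def get_employee(self, employee_id: str) -> dict:
        person = self._raw[employee_id]
        return {
            "id": employee_id,
            "name": f"{person['FirstName']} {person['LastName']}",
            "email": person["EmailAddress"],
        }


def welcome_message(connector: HRISConnector, employee_id: str) -> str:
    """Agent logic written once against the normalised schema."""
    employee = connector.get_employee(employee_id)
    return f"Welcome, {employee['name']}! Your account is {employee['email']}."
```

Because `welcome_message` depends only on the normalised fields, moving the same agent from the first client to the second means swapping the connector rather than rewriting the agent. The cost of that swap is what grows when the platform count moves from four to dozens.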

The Clock Is Ticking

Over 40% of agentic AI projects are at risk of cancellation by 2027 if governance, observability, and ROI clarity aren’t established (Gartner). Meanwhile, 42% of companies scrapped most of their AI initiatives in 2025, up from 17% the year before. The window for consultancies to establish themselves as credible delivery partners for enterprise AI agents is open now — but it won’t stay open indefinitely.

The clients who get agents into production first will set the standard for their industries. The consultancies who get them there will own those relationships for years to come. Those still running perpetual pilots in 18 months will find their clients have moved on to partners who solved the hard problem: not just building agents, but connecting them to the enterprise tech stack.

StackOne is the integration infrastructure built for this moment. Recognised by Gartner as a Cool Vendor in HR Technology, backed by a $20M Series A led by GV (Google Ventures) with participation from Workday Ventures and XTX Ventures, and pioneering the agentic connectivity space with multi-protocol support, enterprise-grade connectors, AI-powered extensibility, and the multi-client management capabilities that consultancies need to scale — StackOne exists so your practice can stop rebuilding plumbing and start delivering production AI Agents.

Connect. Optimise. Secure. Three pillars. One infrastructure layer. The foundation your AI practice needs to get out of the lab and into production — before your competitors do.

“What impressed us most about StackOne is its ambition and clarity. They’re creating infrastructure that modern software and the entire AI agent ecosystem can rely on. The depth of secure integrations, the pace of delivery, and the team’s foresight into AI’s future uniquely position StackOne to redefine this category.”

Google Ventures

To learn more about how StackOne’s integration infrastructure can power your AI consultancy practice, visit stackone.com or get in touch with our partnerships team. For a deeper look at Gartner’s latest research on why the integration layer is make-or-break, read Gartner says 60% of AI agent deployments will fail — the reason is integration.