Richard South · · 10 min

Gartner Says 60% of AI Agent Deployments Will Fail — The Reason Is Integration

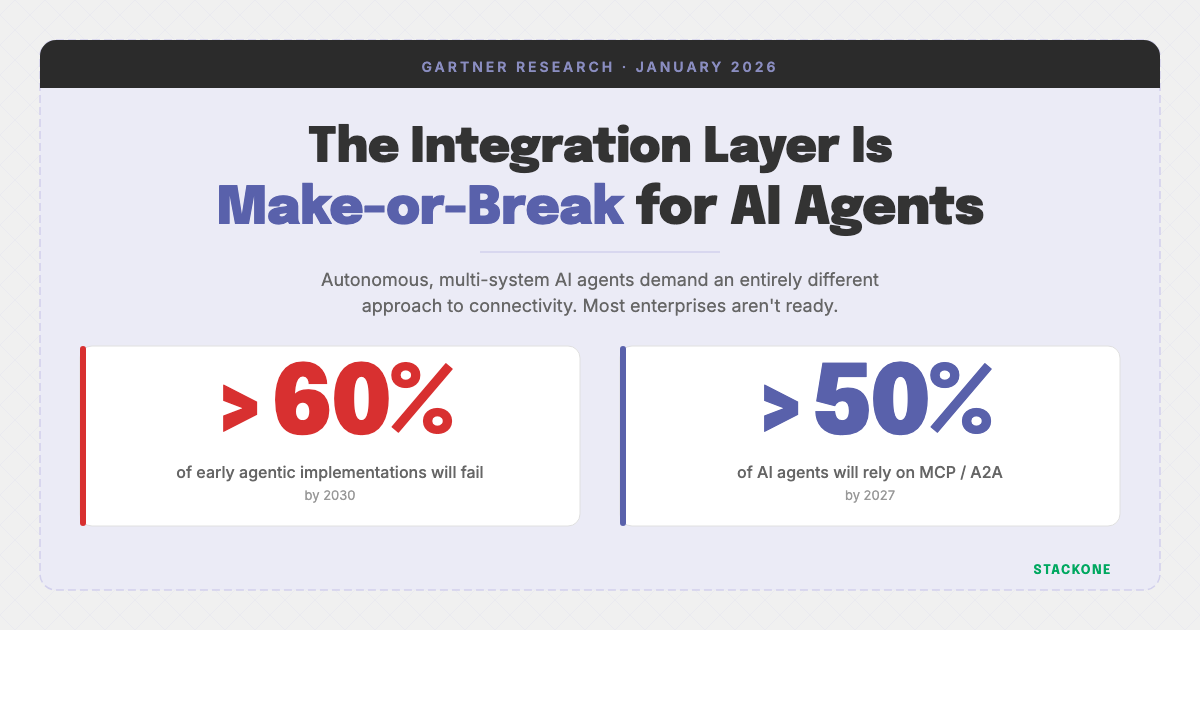

Gartner’s January 2026 research is clear: the integration layer is the single biggest factor determining whether enterprise AI agents succeed or fail. Here’s what the report says — and what it means for your architecture.

Gartner’s latest research, How to Enable Agentic AI via API-Based Integration (January 2026), makes one thing unambiguous: the era of bolted-on chatbots is over. What’s replacing it — autonomous, multi-system AI agents — demands an entirely different approach to connectivity. And most enterprises aren’t ready.

Two predictions from the report frame the stakes clearly.

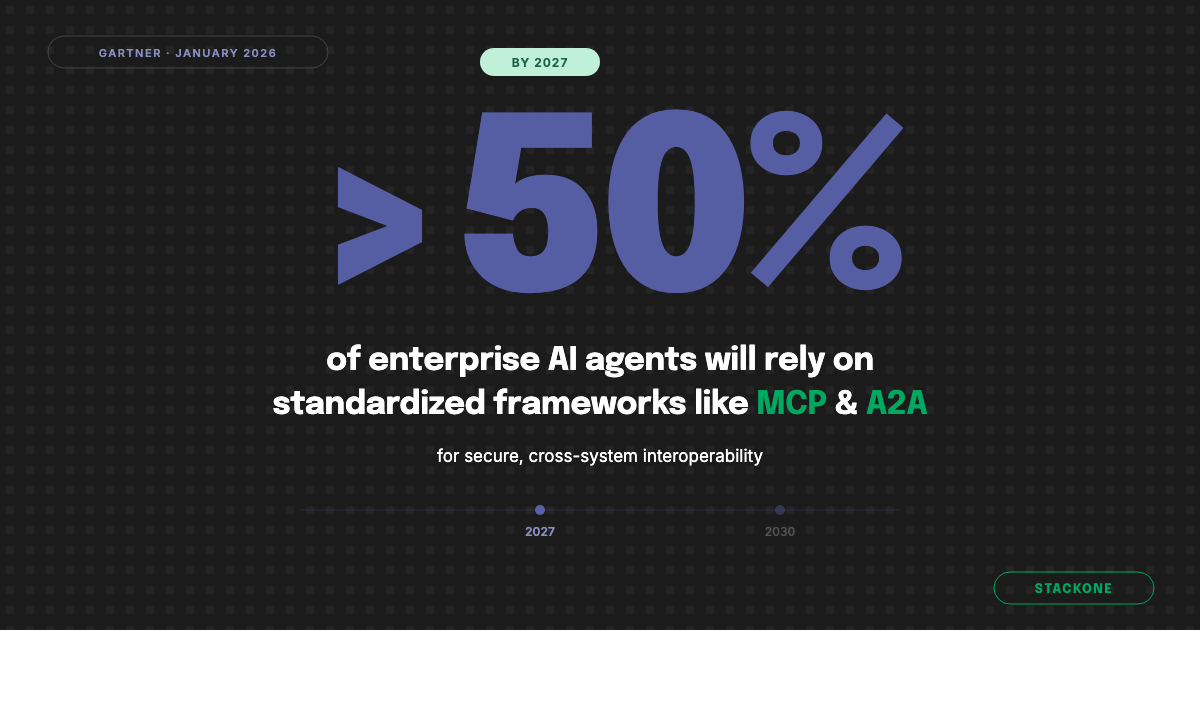

By 2027, over 50% of AI agents deployed in enterprises will rely on standardized frameworks like MCP or the Agent2Agent (A2A) protocol for secure, cross-system interoperability.

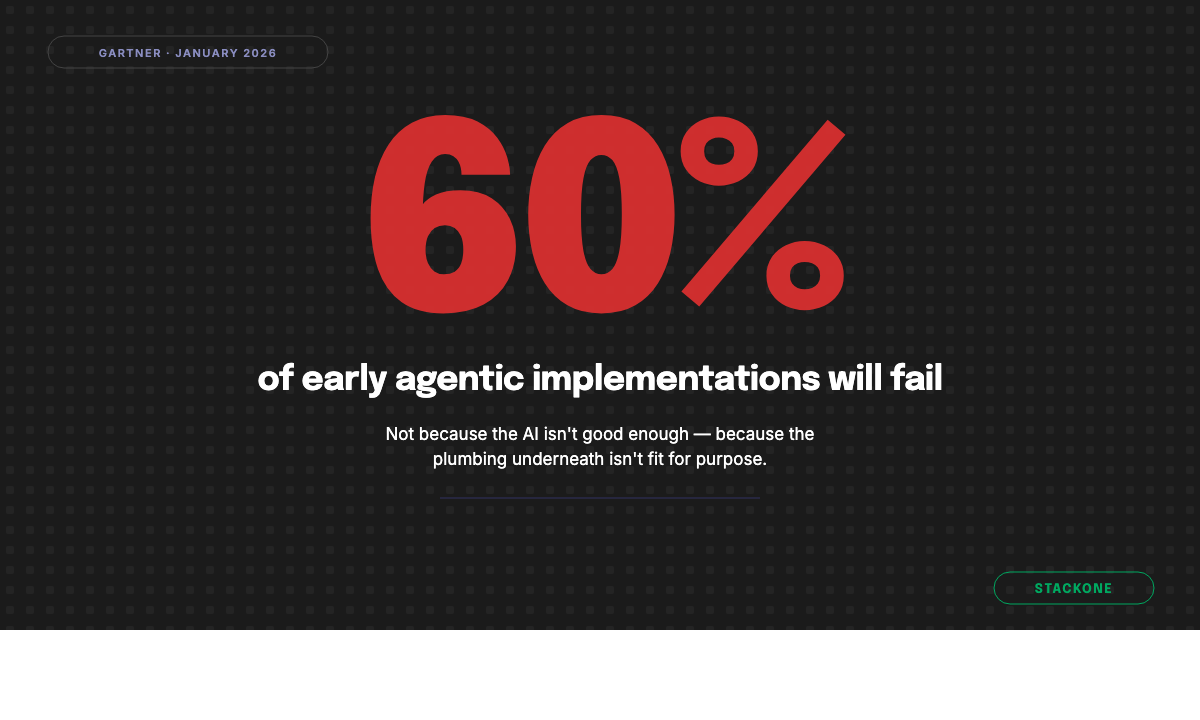

By 2030, more than 60% of early agentic orchestration implementations will fail to meet performance or cost expectations, because enterprises will underestimate the integration, governance, and talent requirements needed to make digital workforces reliable at scale.

That second number is scary. The majority of early movers will fail — not because the AI models aren’t good enough, but because the plumbing underneath them isn’t fit for purpose.

Let’s look at what Gartner says and how it aligns with the next-generation integration infrastructure for AI agents that StackOne has been building.

This Is About Real Agents, Not Chatbots

Gartner draws a sharp line in this report. The first wave of enterprise AI was defined by assistants that sat alongside existing workflows — shallow tools with no real autonomy. The current era demands deep integration, where software is empowered to act, reason, and collaborate as a proactive partner in core business processes.

That distinction matters. A chatbot answers questions. An agent investigates a fraud alert, cross-references data across your HRIS and finance systems, escalates to a human when confidence is low, and logs the outcome, all autonomously. Foundation models already have these reasoning capabilities, but turning them into enterprise-grade production agents requires next-generation connectivity that is real-time, permission-aware, and protocol-native.

If your AI stack still treats integrations as an afterthought, you’re not building agents — you’re building demos.

The Hybrid Connectivity Mandate: MCP + Deterministic APIs

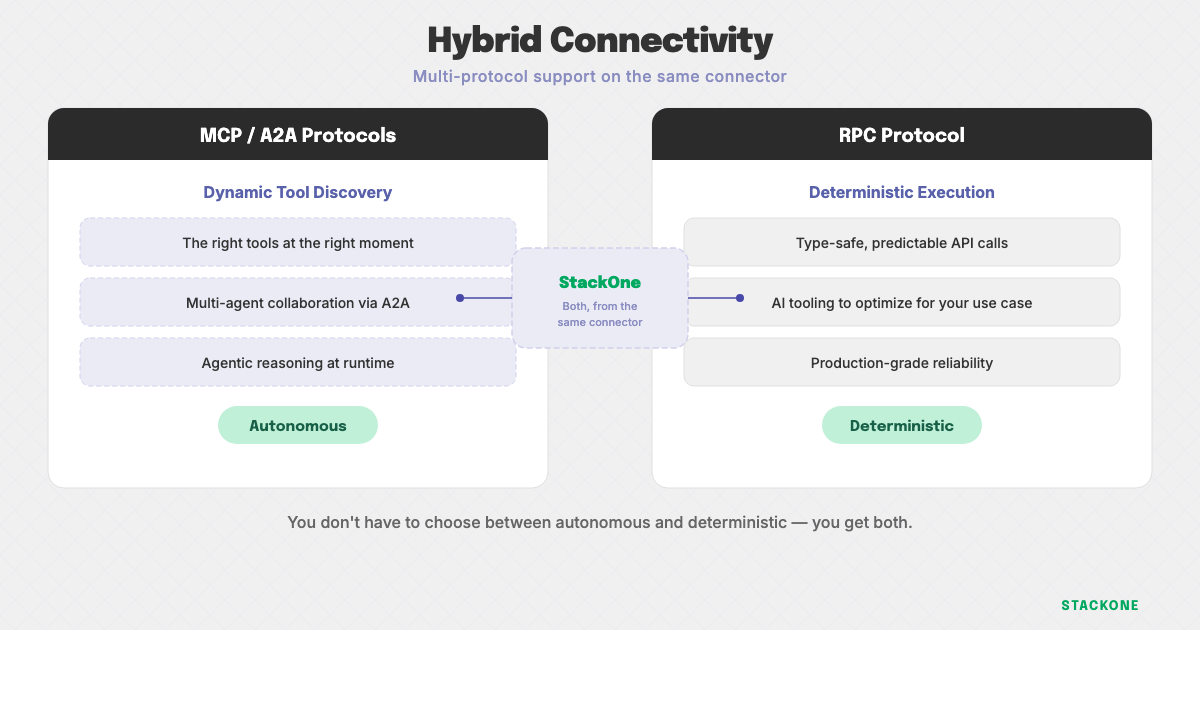

One of the most actionable recommendations in the Gartner report is the call for a hybrid connectivity strategy: use MCP for dynamic tool discovery in uncertain environments, while relying on standardized APIs for deterministic consistency.

This is precisely the architecture StackOne connectors are built around.

All of our connectors support MCP (and include managed MCP infrastructure) for agentic discovery and reasoning, as well as RPC for deterministic execution. You don’t have to choose between reliability and flexibility: you get both from the same connector. We explore this architecture in depth in MCP vs SDK: why you need both.
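To make the hybrid idea concrete, here is a minimal sketch of the two paths side by side. It is illustrative only: `ToolRegistry`, `Tool`, and `get_employee` are hypothetical names invented for this example, not StackOne’s API or an MCP SDK. The registry stands in for an MCP-style server whose tools are listed at runtime, and the plain function stands in for a deterministic API call.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass
class Tool:
    name: str
    description: str
    handler: Callable[[dict], dict]


class ToolRegistry:
    """Hypothetical stand-in for an MCP-style server that advertises tools at runtime."""

    def __init__(self, tools: List[Tool]) -> None:
        self._tools: Dict[str, Tool] = {t.name: t for t in tools}

    def list_tools(self) -> List[dict]:
        # Discovery path: the agent reads names and descriptions, then decides.
        return [
            {"name": t.name, "description": t.description}
            for t in self._tools.values()
        ]

    def call(self, name: str, args: dict) -> dict:
        return self._tools[name].handler(args)


def get_employee(args: dict) -> dict:
    # Hypothetical deterministic operation; a real one would call an HRIS endpoint.
    return {"id": args["id"], "status": "active"}


registry = ToolRegistry(
    [Tool("hris_get_employee", "Fetch one employee record by id", get_employee)]
)

# Deterministic path: the workflow already knows which operation it needs.
direct = get_employee({"id": "e-1"})

# Discovery path: choose from the advertised tools, then call the choice by name.
discovered = [
    t["name"] for t in registry.list_tools() if "employee" in t["description"]
]
via_registry = registry.call(discovered[0], {"id": "e-1"})
```

Both paths end up in the same handler, which is the point of the design: discovery is for deciding what to call, and the underlying operation stays predictable either way.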

Gartner also highlights the importance of the Agent2Agent (A2A) protocol for multi-agent collaboration. StackOne supports A2A natively, on the exact same connectors, meaning your agents can coordinate across systems and with each other using open, standardized protocols — not proprietary glue.

Model Swapping Without Rewiring

The report is explicit about vendor lock-in risk: walled-garden platforms offer speed but limit flexibility. Their recommendation? Maintain an API-first architecture that allows for model swapping.

This is a core design principle at StackOne. Because our connectors are model-agnostic and protocol-native, you can swap the reasoning engine — from GPT to Claude to Gemini to an open-source model — without touching a single integration. The connectivity layer stays stable. The intelligence layer evolves freely.
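As a rough sketch of what “swap the reasoning engine without touching an integration” can look like in code, the example below keeps the tool layer fixed and changes only the object that chooses a tool. Everything here is hypothetical and written for illustration: `ReasoningEngine`, `KeywordEngine`, `FirstToolEngine`, and `TOOLS` are toy stand-ins, not real model clients or StackOne connectors.

```python
from typing import Callable, Dict, List, Protocol


class ReasoningEngine(Protocol):
    """The only contract the agent loop depends on."""

    def choose_tool(self, task: str, tool_names: List[str]) -> str: ...


class KeywordEngine:
    """Toy engine: picks the first tool whose name appears in the task text."""

    def choose_tool(self, task: str, tool_names: List[str]) -> str:
        for name in tool_names:
            if name in task:
                return name
        return tool_names[0]


class FirstToolEngine:
    """Toy engine: always picks the first tool it is offered."""

    def choose_tool(self, task: str, tool_names: List[str]) -> str:
        return tool_names[0]


# The connectivity layer: unchanged no matter which engine is plugged in.
TOOLS: Dict[str, Callable[[str], str]] = {
    "crm_search": lambda q: f"crm results for {q}",
    "ats_search": lambda q: f"ats results for {q}",
}


def run_agent(engine: ReasoningEngine, task: str) -> str:
    tool_name = engine.choose_tool(task, sorted(TOOLS))
    return TOOLS[tool_name](task)


result_a = run_agent(KeywordEngine(), "use crm_search for Acme")
result_b = run_agent(FirstToolEngine(), "use crm_search for Acme")
```

In a real system each engine would wrap a different model provider. The agent loop and the tool table would not need to change.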

Code-First Frameworks Are the Engine Room

Gartner names LangGraph, Microsoft Agent Framework, and CrewAI as the go-to code-first frameworks for complex, nonlinear orchestration. These are the tools engineering teams reach for when low-code platforms can’t handle the logic.

StackOne connectors work natively with all three, plus LangChain, Anthropic SDK, Vercel AI SDK, Google ADK, and more. Our framework guides are designed to get you from zero to integrated agent in days, not months. The point isn’t to replace your orchestration layer; it’s to make sure connectivity never becomes a bottleneck for your agents’ capabilities. Explore our full connector library to see what’s available out of the box.

Targeted Agent Execution and the Context Window Problem

Gartner introduces the concept of the “Back end for Agent” (BFA) pattern — deploying targeted MCP servers that expose only the specific tool metadata and permissions required for a given agent’s mission. The goal: prevent context window overload and improve reasoning accuracy.

This resonates deeply with what we’ve been writing about. When agents are given access to dozens of overlapping tools, their reasoning degrades. They hallucinate tool calls. They burn tokens trying to disambiguate which API to use. We sometimes refer to this as agent suicide by context — and it’s one of the most common failure modes in production agent deployments.

StackOne addresses this directly through scoped MCP servers and intelligent tool search that surface only the connectors relevant to an agent’s current task. Combined with our agent context architecture, this means your agents get exactly the tools they need — nothing more, nothing less.
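The scoping idea behind the BFA pattern can be sketched in a few lines. This is an assumption-laden illustration, not Gartner’s specification or StackOne’s implementation: `ToolSpec`, `CATALOG`, `tools_for_mission`, and the scope strings are all made up for the example. The agent only ever sees tools that are on its mission allowlist and covered by the scopes it was granted.

```python
from dataclasses import dataclass
from typing import List, Set


@dataclass(frozen=True)
class ToolSpec:
    name: str
    description: str
    required_scope: str


# Hypothetical full catalog; a real deployment may expose far more tools.
CATALOG: List[ToolSpec] = [
    ToolSpec("hris_list_employees", "List employees", "hris:read"),
    ToolSpec("hris_update_employee", "Update an employee record", "hris:write"),
    ToolSpec("crm_list_deals", "List open deals", "crm:read"),
    ToolSpec("ats_list_jobs", "List open roles", "ats:read"),
]


def tools_for_mission(
    catalog: List[ToolSpec], allowed_names: Set[str], granted_scopes: Set[str]
) -> List[ToolSpec]:
    """Keep only tools that are on the allowlist AND covered by a granted scope."""
    return [
        tool
        for tool in catalog
        if tool.name in allowed_names and tool.required_scope in granted_scopes
    ]


# A read-only onboarding agent: two HRIS tools requested, only read scope granted.
onboarding_tools = tools_for_mission(
    CATALOG,
    allowed_names={"hris_list_employees", "hris_update_employee"},
    granted_scopes={"hris:read"},
)
exposed_names = [tool.name for tool in onboarding_tools]
```

Only the metadata for the surviving tools would be placed in the agent’s context, which keeps the prompt small and removes look-alike tools the agent could otherwise confuse.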

Permissions-Aware RAG and Least Privilege

The report’s section on delegated identity and governance is critical. Gartner calls for least privilege and RBAC — enforcing user-scoped tokens and fine-grained authorization to set limits on what agents can access and do.

StackOne’s new Permissions API brings this principle directly into the retrieval layer, enabling agentic RAG that respects the ACL of the system each source originated from. When an agent searches across ingested documents, knowledge bases, and more, it can first query the ACL of the underlying source for the user’s actual permissions, so results are limited to what that user is allowed to see. For a deeper look at how prompt injection attacks threaten production agents, see our analysis of indirect prompt injection defenses for MCP tools.
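Here is a minimal sketch of permission-aware retrieval, to show where the ACL check sits relative to the search. It does not reflect the actual Permissions API: `Document`, `SOURCE_ACL`, `can_read`, and `retrieve` are hypothetical, the ACL is a hard-coded dictionary instead of a live lookup against the source system, and the “search” is a plain substring match.

```python
from dataclasses import dataclass
from typing import Dict, List, Set


@dataclass(frozen=True)
class Document:
    doc_id: str
    source: str
    text: str


# Hypothetical ACL data: source system -> document id -> users allowed to read it.
SOURCE_ACL: Dict[str, Dict[str, Set[str]]] = {
    "drive": {"d-1": {"alice", "bob"}, "d-2": {"alice"}},
    "wiki": {"w-1": {"bob"}},
}

INDEX: List[Document] = [
    Document("d-1", "drive", "Salary bands for engineering"),
    Document("d-2", "drive", "Salary review notes for leadership"),
    Document("w-1", "wiki", "How salary reviews are scheduled"),
]


def can_read(user: str, doc: Document) -> bool:
    # Default deny: unknown sources or documents are never returned.
    return user in SOURCE_ACL.get(doc.source, {}).get(doc.doc_id, set())


def retrieve(user: str, query: str, index: List[Document]) -> List[Document]:
    """Naive keyword match, then drop anything the user cannot read at the source."""
    matches = [doc for doc in index if query.lower() in doc.text.lower()]
    return [doc for doc in matches if can_read(user, doc)]


alice_ids = [doc.doc_id for doc in retrieve("alice", "salary", INDEX)]
bob_ids = [doc.doc_id for doc in retrieve("bob", "salary", INDEX)]
```

The same query returns different documents for different users, and the filter runs before any text reaches the model, so the agent cannot leak content the user could not have opened directly.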

Real-Time Data or Nothing

Gartner doesn’t mince words on this one: AI agents cannot reason effectively on day-old data. Their recommendation is to rework batch-based systems into real-time, event-driven architectures.

StackOne is an entirely real-time platform. Every connector delivers live data: not cached snapshots, not overnight syncs. When an agent queries your ATS for open roles, your HRIS for employee records, your LMS for completion data, your CRM for open opportunities, or your Slack channels for recent messages, it’s working with the current state of the world. For autonomous agents making decisions and taking actions, anything less is a liability.
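The difference between a batch snapshot and an event-driven view is easy to see in a toy example. The sketch below is purely illustrative and assumes nothing about StackOne’s internals: `EventBus`, the `ats.role.changed` topic, and the in-memory dictionaries are hypothetical.

```python
from typing import Callable, Dict, List


class EventBus:
    """Tiny in-process publish/subscribe bus, for illustration only."""

    def __init__(self) -> None:
        self._subscribers: Dict[str, List[Callable[[dict], None]]] = {}

    def subscribe(self, topic: str, handler: Callable[[dict], None]) -> None:
        self._subscribers.setdefault(topic, []).append(handler)

    def publish(self, topic: str, event: dict) -> None:
        for handler in self._subscribers.get(topic, []):
            handler(event)


# Live view of role status, kept current by events.
open_roles: Dict[str, str] = {"r-1": "open"}

# What an overnight batch job would have captured before the change below.
nightly_snapshot: Dict[str, str] = dict(open_roles)


def on_role_changed(event: dict) -> None:
    open_roles[event["role_id"]] = event["status"]


bus = EventBus()
bus.subscribe("ats.role.changed", on_role_changed)

# The role is filled during the day; the event updates the live view immediately.
bus.publish("ats.role.changed", {"role_id": "r-1", "status": "closed"})
```

An agent reading `nightly_snapshot` would still try to route candidates to a role that has already closed, while one reading `open_roles` would not.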

The 60% Failure Rate Is an Integration Problem

Let’s come back to that sobering Gartner prediction: more than 60% of early agentic orchestration implementations will fail to meet performance or cost expectations. The reason isn’t bad models or poor prompting. It’s that enterprises will underestimate the integration, governance, and talent requirements.

This is the gap StackOne exists to close. We handle the connectivity layer — real-time, permission-aware, protocol-native, framework-agnostic — so your engineering team can focus on the orchestration logic and business outcomes that actually differentiate your agents.

If you’re building enterprise agents, either for yourself or on behalf of your clients, or if you’re a B2B SaaS vendor aspiring to add truly agentic capabilities to your platform, getting the connectivity layer right is what will put you in the 40% who succeed, not the 60% who fail.

The Gartner report provides the blueprint. StackOne provides the foundation to build on it. StackOne was recognised by Gartner as a Cool Vendor in HR Technology. If you’re a consultancy building agents for clients, read how AI consultancies can get their clients’ agents out of pilot purgatory.

The Gartner report referenced in this post is “How to Enable Agentic AI via API-Based Integration” (ID G00843944, 10 January 2026) by Adrian Leow, Mark O’Neill, et al. GARTNER is a registered trademark and service mark of Gartner, Inc. and/or its affiliates in the U.S. and internationally.